Synphony

Mixed reality experience

Academic Research Project

Digital Direction MA Final Project at the Royal College of Art

My Role & Tools Used

Research & Design

Conception

Art Direction (Photoshop, After Effects)

3D Generalist (3ds Max, SketchUp)

Game Design, Prototyping & Development (Unity 3D with SteamVR and VFX Graph, C#)

UX/UI Design (Figma, Illustrator)

Physical Prototyping (3D print, physical computing)

Machine Learning (Python, scikit-learn and Pure Data)

Music Production (Ableton)

Date

2019-2020

What it is

Synphony is an experimental mixed reality interactive system that augments the experience of playing instruments with colours.

Inspired by the naturally-occurring phenomenon of synaesthesia, Synphony gives participants the superpower of seeing music in a new way, providing a playground for a creative exploration of harmonies using immersive visuals.

The experience introduces beginners to the wonders of musical improvisation by facilitating the first steps and expands the cross-modal perception of music in advanced musicians.

Context

Conventional music education, even when aimed at young children, follows strict rules and relies largely on music theory rather than hands-on play, which often discourages participants from pursuing music further. Furthermore, musicians in improvisation sessions usually communicate verbally, which is not necessarily best suited to the experiential character of practising music.

The Synphony project addresses these problems by attempting to replace the conventional music notation system with colours.

Photo by Vivek Menon

Synphony being tested as a music education game in the Education Mode by a beginner.

The project also addresses the problem of how technology tends to alienate us from one another more and more. Synphony encourages co-creation of music with multiple people by catalysing a new musical conversation and collaboration.

So far, in its early form, the project has been tested by two musicians playing the guitar and the piano simultaneously, but the ultimate goal is to increase the number of instruments, even to accommodate a symphony orchestra of up to a hundred musicians. I can imagine a concert where each musician releases a colourful visual representation of the played tone, key, timbre and velocity, which then merges with the rest to create a poetic but also informative visual interpretation, as if straight from Fantasia, visible in space to both the musicians and the audience.

Group improvisation session in the Jam Session Mode.

Advanced pianist testing the Playground Mode by improvising and letting himself get carried to suggested “neighbouring” tonalities by the system.

In its current form, the project uses a pair of DIY AR glasses through which musicians can see the spatial holograms. Harmonies, individual notes and the key are conveyed through colourful volumetric visuals, which let the musicians communicate with each other and, via an additional screen, with the audience.

The colours are related to one another based on the harmonies they create (the closer the colours, the more consonant the resulting sound combination).
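One way to picture this relationship is the minimal Python sketch below. It is not the project's actual colour code: it simply assumes that each note's hue is its position on the circle of fifths, with C arbitrarily placed at hue 0, so that notes a fifth apart get neighbouring colours. The function names are hypothetical, and this toy distance only captures closeness in fifths, not every kind of consonance.

```python
import colorsys

def fifths_position(pitch_class: int) -> int:
    """Index of a pitch class on the circle of fifths (C=0, G=1, D=2, ...)."""
    # A fifth is 7 semitones, so multiplying by 7 modulo 12 reorders
    # the chromatic scale into circle-of-fifths order.
    return (pitch_class * 7) % 12

def note_hue(pitch_class: int) -> float:
    """Hue in [0, 1), assuming one twelfth of the colour wheel per fifth."""
    return fifths_position(pitch_class) / 12.0

def note_rgb(pitch_class: int) -> tuple:
    """Fully saturated RGB colour for a pitch class (assumed mapping)."""
    return colorsys.hsv_to_rgb(note_hue(pitch_class), 1.0, 1.0)

def hue_distance(a: int, b: int) -> float:
    """Shortest distance between two notes' hues around the colour wheel."""
    d = abs(note_hue(a) - note_hue(b))
    return min(d, 1.0 - d)

# C and G (a fifth apart) sit next to each other on the wheel,
# while C and F# (a tritone apart) end up on opposite sides.
print(hue_distance(0, 7), hue_distance(0, 6))
```

Under this assumed mapping, the tritone is the most distant colour pair, and a semitone is also far away, which matches the idea that closer colours mean more closely related notes.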

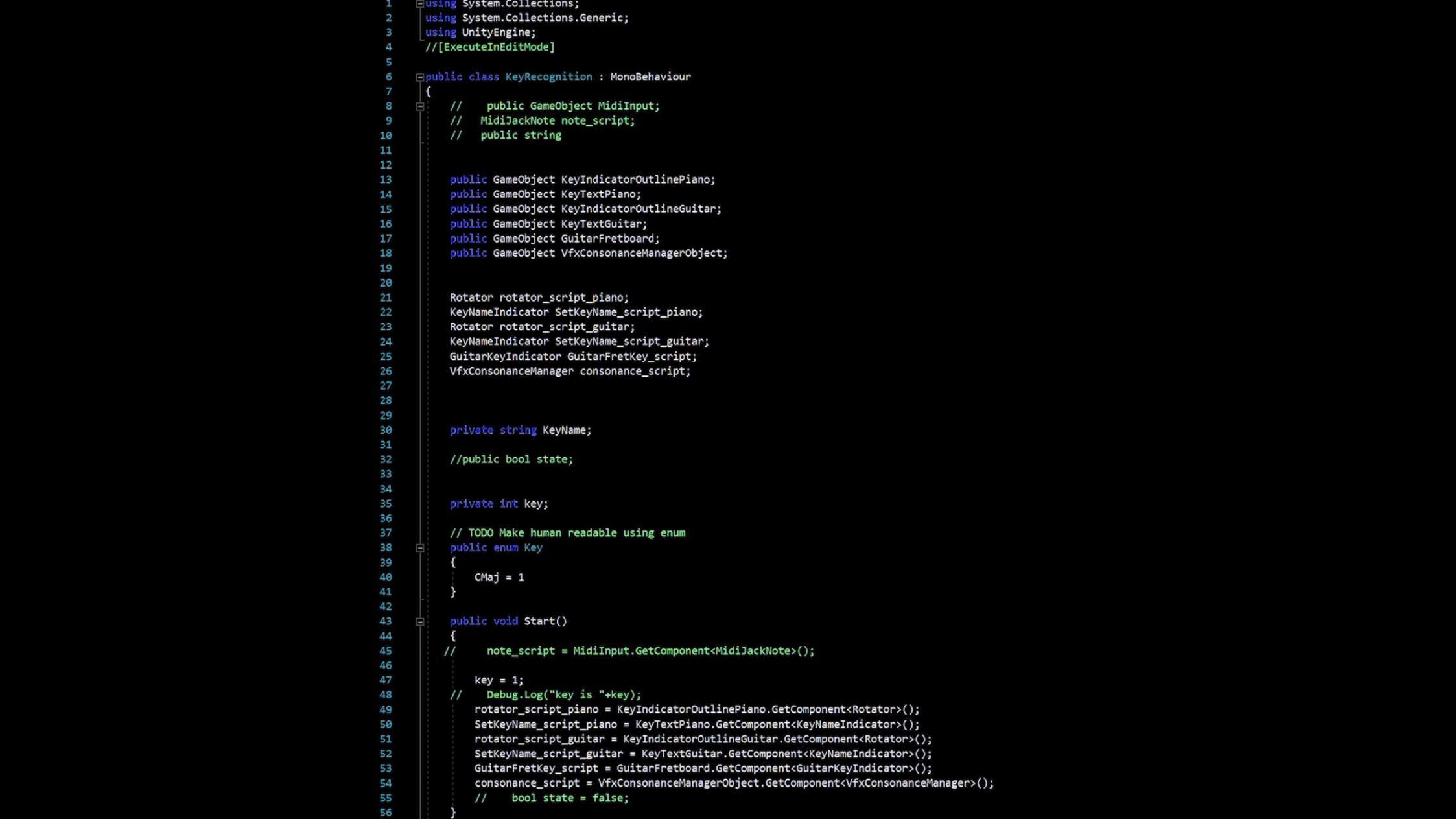

There is also an interactive key recognition script of my own invention that picks up, in real time, the tonality in which the musician is playing. The system’s awareness of the key enables interesting ways of playing with the visual representation of consonance and dissonance, and of assisting the musician in exploring harmony through an intuitive UI that indicates creative possibilities.

Different Modes

The experience can be used in four different ways: alone, in the Playground and Education modes, and together (to avoid alienation and encourage co-creation), in the Jam Session and Show modes. Each mode gives a taste of a different possible use of a fully developed product:

- The Playground mode is a powerful composition aid for professionals – it assists with composing by suggesting the creative possibilities based on a tonality in which the musician is playing.

- The Education mode conveys the laws of harmony and music theory to beginners in an intuitive and visually compelling way, so that students are not discouraged by the conventional notation system. In this mode, the user is guided through interactive lessons step by step with the assistance of a narrator. The aim is to instill a musical intuition built through colour associations.

- The Jam Session mode forms a catalyst for a musical conversation between multiple players, helping the improviser stay in tune by picking up the key imposed by the leader.

- The Show mode generates interactive concert visualisations for the audience in real-time, visible from their perspective. Each musician releases a colourful visual representation of the played tone, key, timbre and velocity that subsequently merges with the rest to create a poetic but also an informative visual interpretation.

The Process

The Creative/Artistic

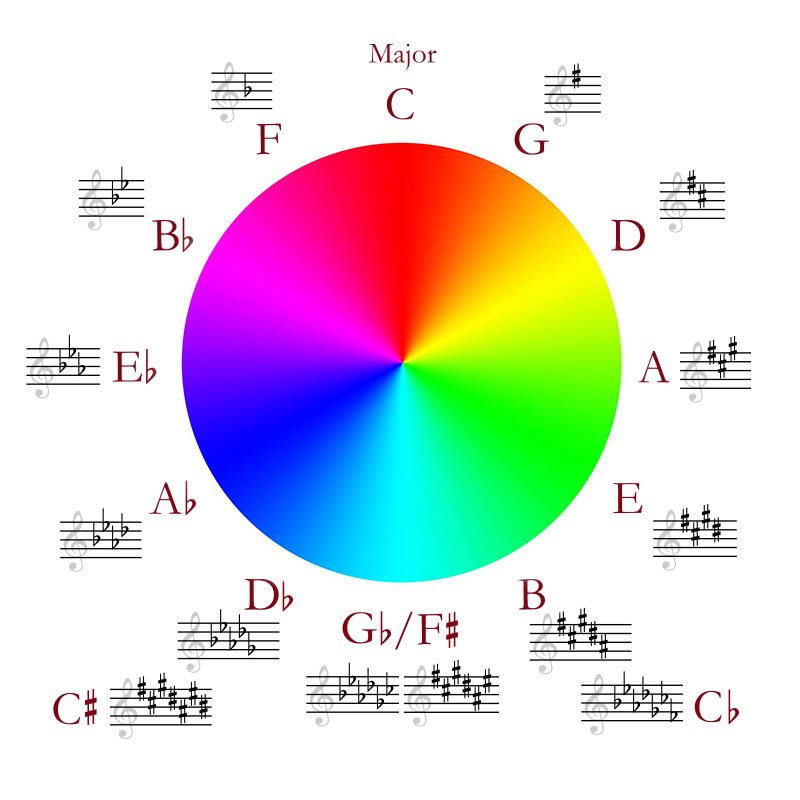

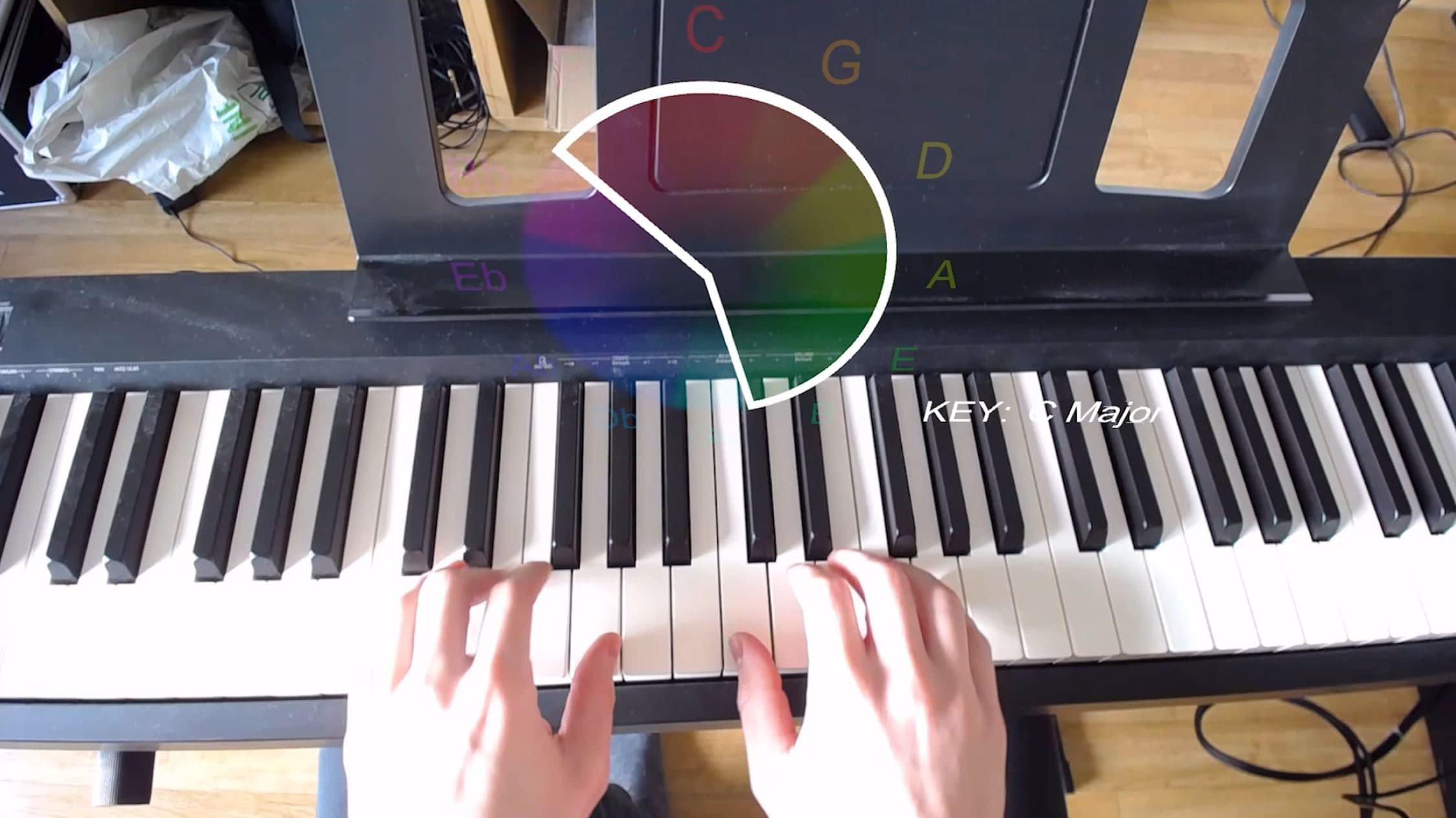

After extensive research on synaesthesia, the previous use of colour in music, and colour and music theory, I colour-coded the Western music system in a way that conveys harmonic relations through colour similarities. Subsequently, I created a UI based on the circle of fifths for intuitive navigation through harmonies and keys, followed by weeks of user testing, experimentation and prototyping.

Inspired by the ideas of flowing waves, chords and dissonance/consonance, I created particle visualisations in VFX Graph that flow in space and merge together in vortexes, or get distorted and separated if played dissonantly. I also wrote the narrative and composed and produced the backing music for the Education mode (explained in the Different Modes section).

The Technical

The project in its current form uses a custom-made MR headset tracked spatially with a tweaked version of HTC Vive’s tracking solution, Leap Motion hand tracking for UI input, a monitor dedicated to the audience that displays what the user is seeing in real time, the instruments (also tracked), and a powerful PC.

The experimental headset used in this project was custom-built with the help of Smart Prototyping, following the North Star project blueprints open-sourced by Leap Motion. I did not use an off-the-shelf headset such as HoloLens or Magic Leap because of the North Star’s superior field of view (its greater coverage at the bottom is especially useful for guitarists).

On the software side, the project was built in Unity. I calibrated and fine-tuned the AR rig to make sure everything is displayed in the right place and at the right scale. This was a huge challenge given the experimental hardware and the imperfect tracking precision of the Vive, especially when working with instruments, where precision is crucial. I also introduced multi-instrument MIDI support (a customised version of MidiJack by Keijiro from GitHub), created the VFX Graph particle visualisations, and wrote around 30 C# scripts ranging from game managers to interaction managers, including a proprietary ‘intelligent’ key recognition script that guesses what tonality the musician is in. Finally, I implemented a Machine Learning solution that recognises guitar notes polyphonically from audio in real time (using Python with scikit-learn and Pure Data).

The Process Video

Research

recap

“Fragment 2 for Composition VII” by Wassily Kandinsky, who was a synaesthete.

There is a lot of synaesthesia-themed art nowadays, where the synaesthetes merge sound and visuals trying to convey how they see music.

Wassily Kandinsky, being one of them, saw music and colour as inextricably tied to one another.

Melissa McCracken paints well-known songs the way she sees them.

Those artists convey their own subjective way of seeing music.

I wanted to make this magic of seeing music accessible to everybody, so that everybody could relate to it.

That’s what Fantasia achieved with great success in 1940 and 2000.

The cross-modal marriage of colour, form and music that is universally relatable is very appealing to people.

I also think the experience of listening to and making music is so ineffable that it can’t be put into words. I therefore thought colour and form could be more appropriate for conveying the qualia of music experientially, avoiding verbal communication.

According to Michel Chion, the right combination of sound and visuals has the power to create a gestalt effect in the user, in which the two become unified.

I tried to leverage this phenomenon of synchresis to help the user achieve a higher level of perception.

Synphony addresses the future of storytelling by letting the user co-create a non-linear narrative with a machine.

Steve Dixon considers this the highest level of interaction and the Holy Grail of interactive storytelling.

I let users go through their own internal journey of creative exploration and discover their own visual atmosphere around music, individually or in a group.

“As long as I can remember, I have always used colours to remember music. It has given me a good idea for music, which benefited me, of course. If people asked me how I could play pieces by heart after hearing them three times, I answered that I remembered the colours.[…]”

– Dorine Diemer – student at the Amsterdam Academy of Music

What really struck me was how often synaesthetes claim that synaesthesia helps them accomplish certain tasks.

That gave me the idea to look for a consistent colour code that I could apply to musical notes for practical purposes as well, such as education.

Overlaying a chromatic circle on top of the circle of fifths exposed fascinating patterns within Western music.

For example, the idea of consonance and dissonance in music can be applied to colour science.

There have been many attempts over the last few centuries to colour-code music.

Isaac Newton was the first one to realise that we perceive colour in a similar way to sound – both are only inaudible and colourless waves until they reach a brain that maps them to qualities such as pitch and colour.

Out of all these different colour codes, the closest to mine is that of Alexander Scriabin, a famous Russian composer who also might have been a synaesthete.

He was particularly interested in the psychological effects on the audience when they experienced sound and colour simultaneously.

His theory was that when a colour was perceived with the correct matching sound, “a powerful psychological resonator for the listener” would be created.

He applied his colours only to tonalities, not individual notes, and displayed them on a “light organ” dedicated to the visuals.

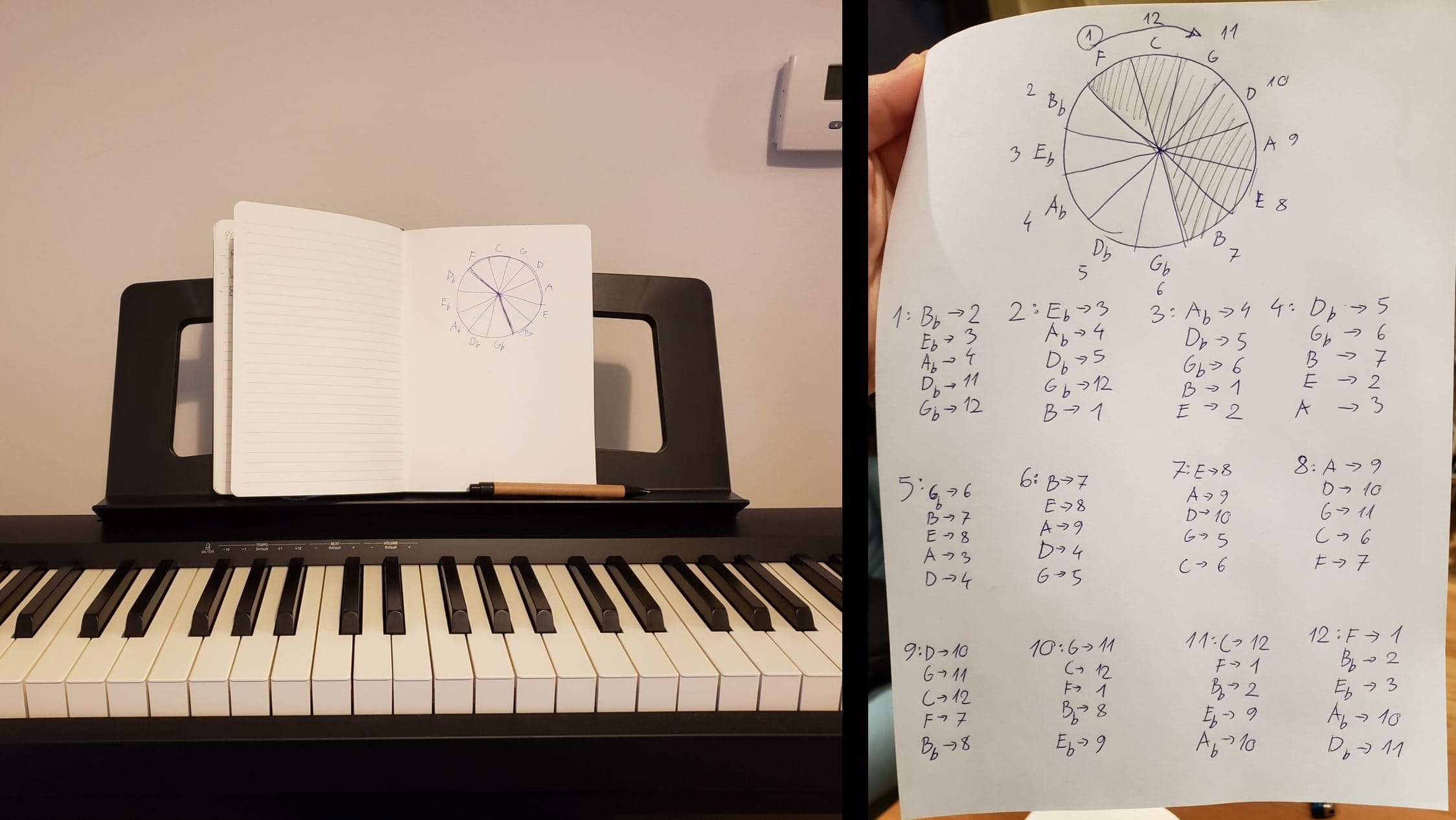

To explore the idea of representing different tonalities, I analysed how transitions from one tonality to another happen in existing Western music and in improvisation techniques. I wrote down all the possible transitions from every possible starting point and transcribed everything into 800 lines of if statements and switches in C#, creating an interactive key recognition script in Unity.
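To give a feel for what key recognition involves, here is a much simpler stand-in written in Python. It is not the rule-based transition script described above: it only assumes major keys, scores each of the twelve by how many recently played notes fit its scale, and keeps the current key when the evidence is ambiguous. All names are hypothetical.

```python
MAJOR_SCALE = (0, 2, 4, 5, 7, 9, 11)  # semitone steps of a major scale

def scale_pitch_classes(tonic: int) -> set:
    """Pitch classes (0-11) belonging to the major key on the given tonic."""
    return {(tonic + step) % 12 for step in MAJOR_SCALE}

def estimate_major_key(recent_notes, current_tonic=None) -> int:
    """Guess the major key (as a tonic pitch class) from recent MIDI notes."""
    pcs = [note % 12 for note in recent_notes]
    # Score every candidate key by the number of played notes it contains.
    scores = {
        tonic: sum(pc in scale_pitch_classes(tonic) for pc in pcs)
        for tonic in range(12)
    }
    best = max(scores.values())
    candidates = [tonic for tonic, score in scores.items() if score == best]
    # Stay in the current key if it explains the notes as well as any other.
    if current_tonic in candidates:
        return current_tonic
    # Otherwise prefer the candidate whose tonic was played most often,
    # breaking remaining ties in favour of the lowest pitch class.
    return max(candidates, key=lambda tonic: (pcs.count(tonic), -tonic))

# A full C major scale is only fully contained in C major (tonic 0).
print(estimate_major_key([60, 62, 64, 65, 67, 69, 71]))
```

A counting approach like this cannot tell a major key from its relative minor and reacts slowly to modulations, which is one reason a hand-written model of likely transitions can be attractive.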

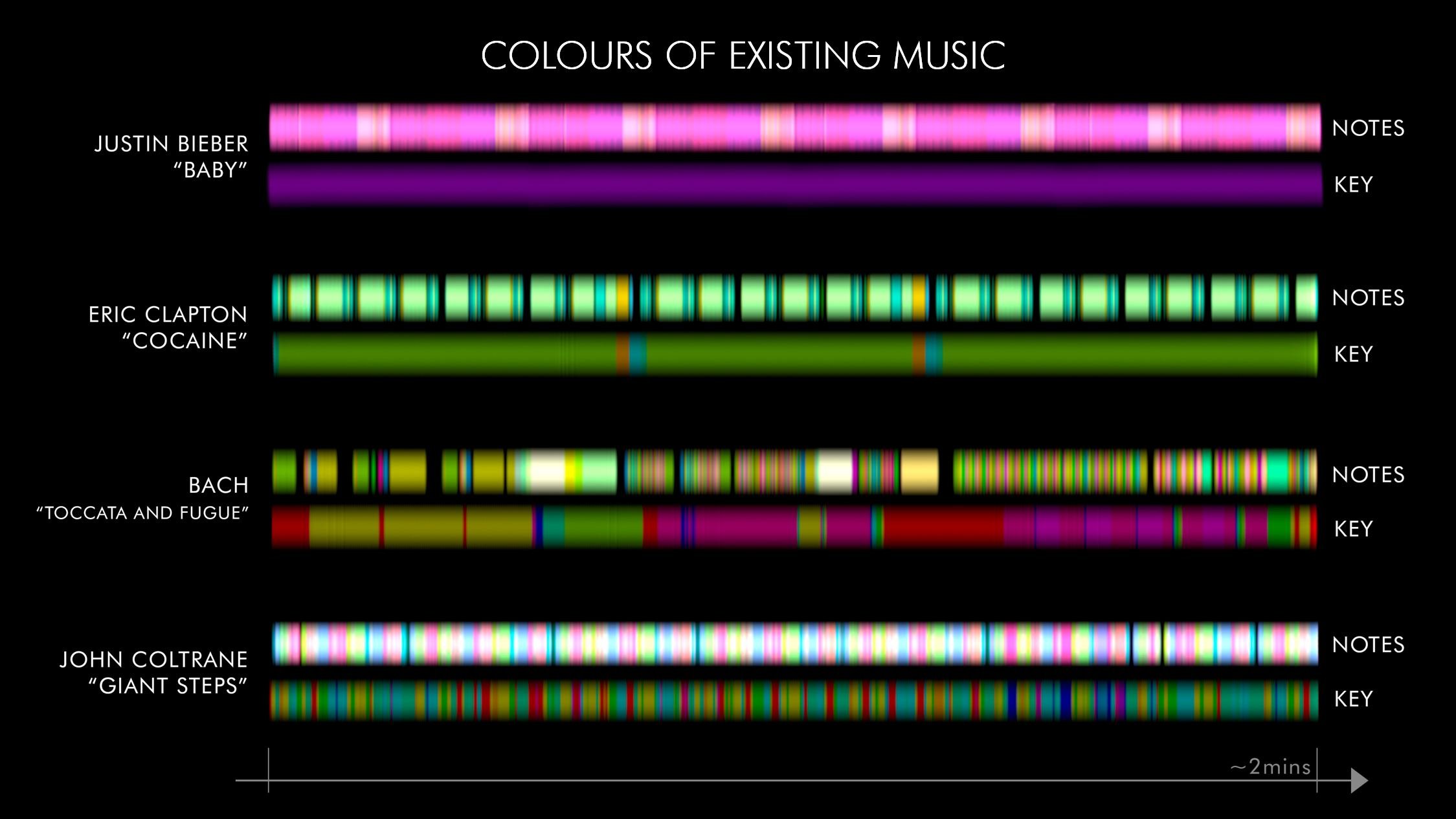

To see if my colour coding can reveal interesting patterns within existing music, I analysed some well-known pieces from different genres and visualised them on a timeline.

It is interesting how clearly the colours reveal the differences in harmonic complexity between musical styles.

Justin Bieber’s hit “Baby” relies on only one key, and the colours show how little its notes vary harmonically.

Eric Clapton’s “Cocaine” is a classic rock song also written in one key, which makes it easy to improvise over.

Bach’s Toccata and Fugue now clearly shows its complexity. It is interesting to see almost opposite colours occurring right next to each other within the notes.

John Coltrane’s “Giant Steps” might be the jazz piece musicians fear most to improvise over, and the colour analysis clearly demonstrates why. The piece is a result of his study of the circle of fifths, in which he tried jumping all across it from one tonality to another.

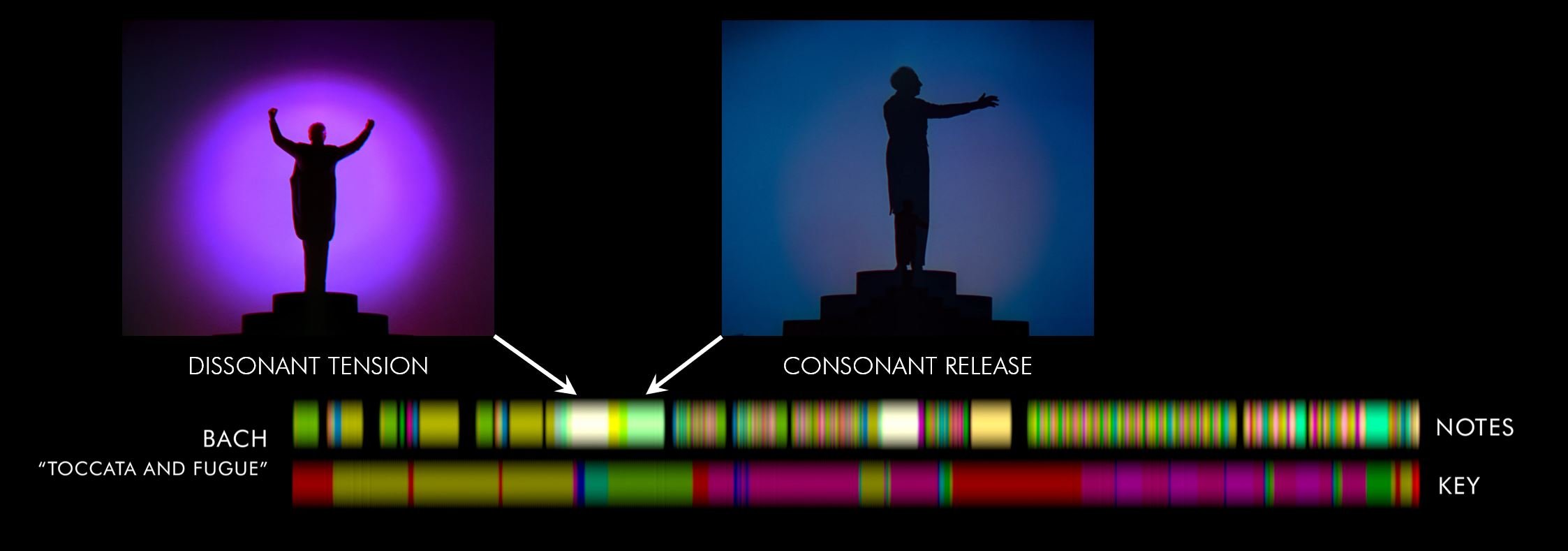

Let’s just get back to Bach for one moment.

Remember those moments of tension where all the dissonant sounds build up and then resolve into relieving consonant chords?

Fantasia conveyed them with a dramatic, sharp colour followed by a calming blue.

My coloured notes portray the moment of suspense as a white patch, because all the dissonant notes desaturate each other. It is then followed by a harmonious, saturated yellow-green release, which is calming to our ears.
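The desaturation effect can be illustrated with a small Python sketch. It assumes, purely for illustration, that simultaneous notes are blended by averaging their hues as points on the colour wheel; the actual blending used in the visuals is not specified here, and the function names are hypothetical. With this kind of averaging, opposite hues cancel out towards white, while neighbouring hues stay saturated.

```python
import colorsys
import math

def blend_hues(hues):
    """Blend hues (each in [0, 1)) by averaging them as unit vectors.

    Returns (hue, saturation): the direction of the mean vector gives the
    blended hue and its length gives the saturation.
    """
    if not hues:
        return (0.0, 0.0)
    x = sum(math.cos(2 * math.pi * h) for h in hues) / len(hues)
    y = sum(math.sin(2 * math.pi * h) for h in hues) / len(hues)
    saturation = math.hypot(x, y)
    hue = (math.atan2(y, x) / (2 * math.pi)) % 1.0
    return (hue, saturation)

def blend_to_rgb(hues):
    """RGB colour of the blend at full brightness."""
    hue, saturation = blend_hues(hues)
    return colorsys.hsv_to_rgb(hue, saturation, 1.0)

# Opposite hues cancel out and give (almost exactly) white,
# while neighbouring hues keep most of their saturation.
print(blend_to_rgb([0.0, 0.5]))
print(blend_hues([0.0, 1 / 12]))
```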

Once I had the underlying technical foundation, I started working on a more captivating and immersive aesthetic to convey the visuals in a compelling and entertaining way.

Also, I wanted to avoid isolation and instead create a sense of togetherness. It now works on the piano and guitar but my aim is for it to be used on all the instruments, individually or together in a concert.

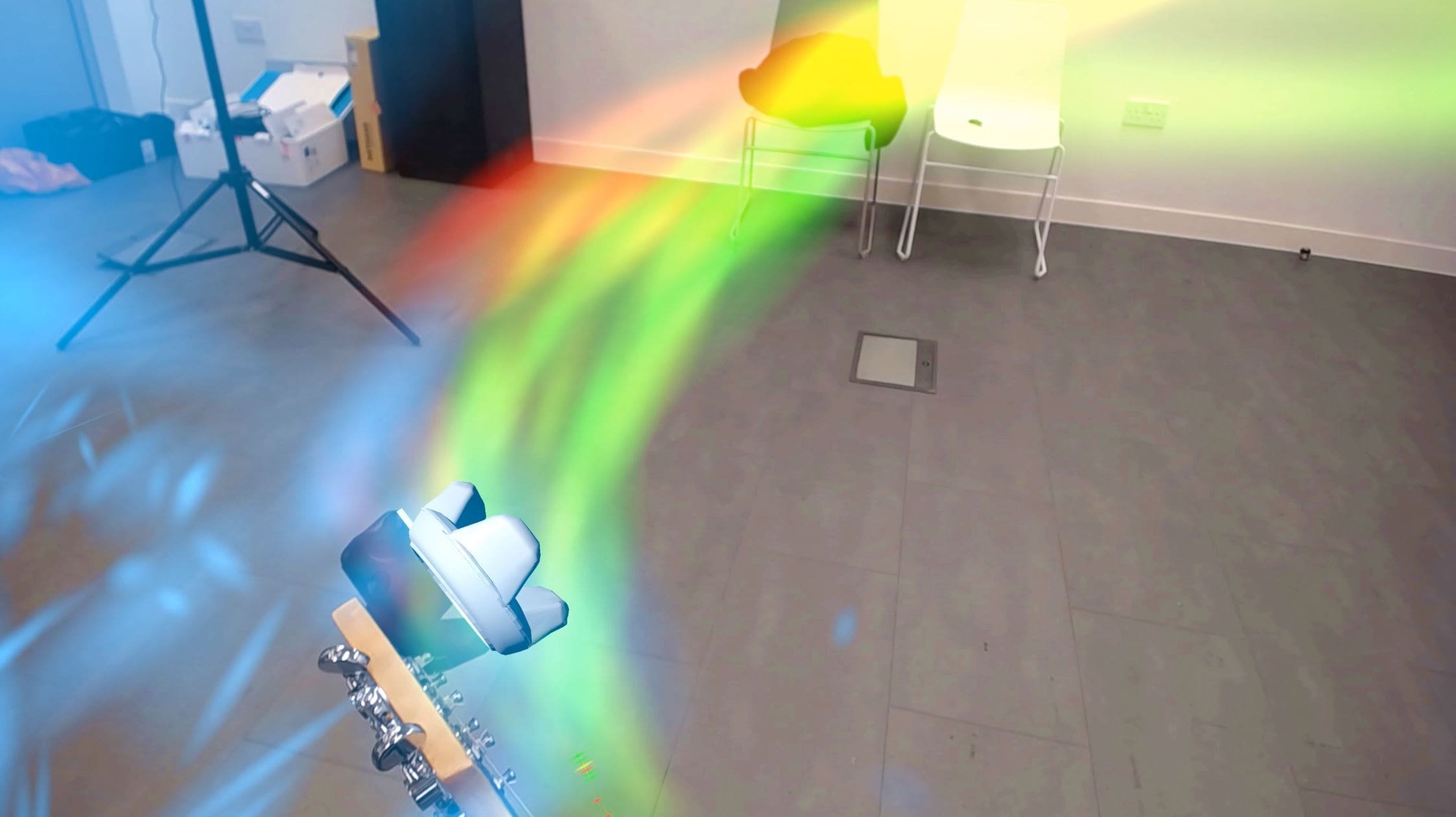

Here we can see how differently Synphony portrays dissonance and consonance: on the left, a distorted blue note that doesn’t belong to the tonality of the “warm” coloured notes, and so doesn’t merge with the fluid, consonant right side.

Dissonance in music may be the result of a mistake, but very often it is intended, for example through the use of blue notes.

That’s why I wanted to communicate the dissonance in a non-binary way that is open to interpretation.

Once I had developed my “colour engine”, I spent weeks testing the system by improvising on the piano.

With the real-time response of my colour wheel, I started noticing much more about the music I played from memory than I had ever noticed before. It helped me understand improvisation better.

“[…] Unlike my fellow students, I don’t need to remember names of notes or keys, but only colour combinations. And as I perceive the colours always vaguely projected on the keyboard, it is not so difficult to reproduce the music. […] Each piece has its own colour profile for me. How are other people able to tell things apart?”

– Dorine Diemer

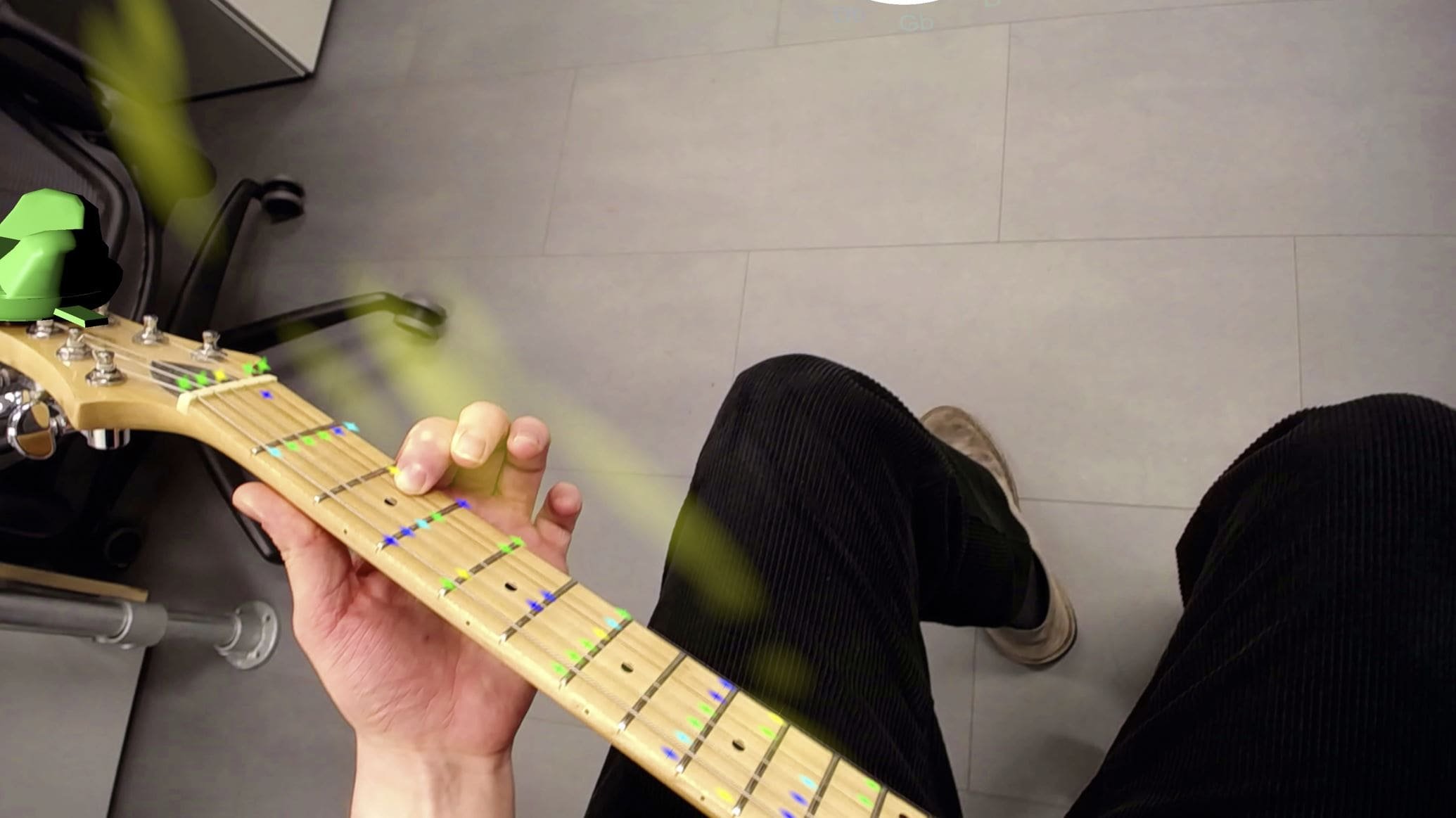

In addition to showing the colours through spatial volumetric visuals in my final piece, the system projects colourful instructions directly onto the instrument.

The tonality recognition is in charge of deciding what notes to highlight.
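As a rough illustration of that decision, the Python sketch below lists which notes in a given range belong to a recognised key. It assumes major keys only and a hypothetical function name; how the real system chooses and colours the highlighted notes is not covered here.

```python
MAJOR_SCALE_STEPS = (0, 2, 4, 5, 7, 9, 11)  # semitone steps of a major scale

def notes_to_highlight(tonic: int, low: int = 48, high: int = 72) -> list:
    """MIDI notes between low and high (inclusive) that belong to the major
    key on the given tonic pitch class (0 = C, 7 = G, ...)."""
    in_key = {(tonic + step) % 12 for step in MAJOR_SCALE_STEPS}
    return [note for note in range(low, high + 1) if note % 12 in in_key]

# In C major, one octave up from middle C highlights only the white keys.
print(notes_to_highlight(0, 60, 72))
```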

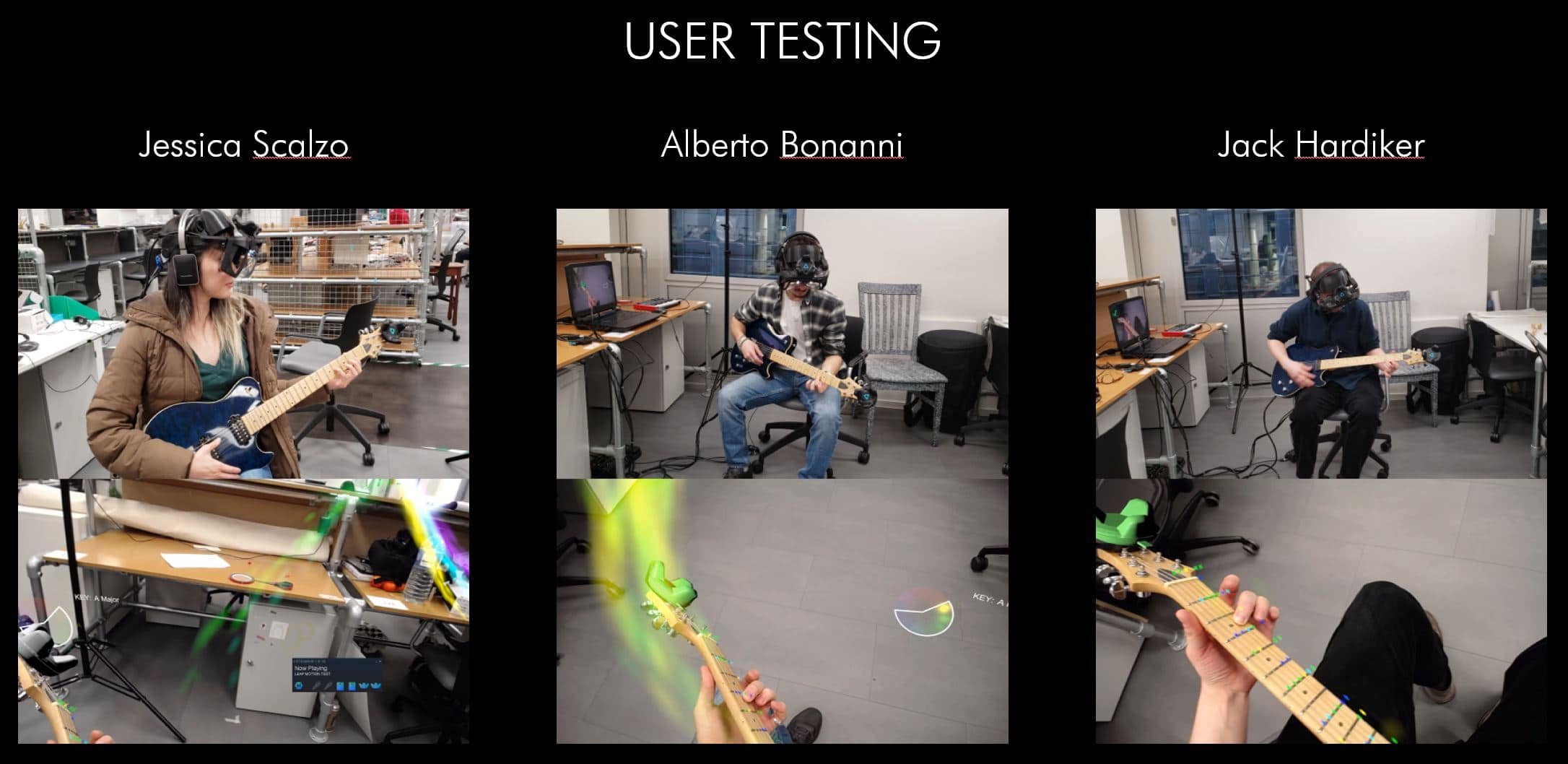

I recently started user testing to gather feedback from others.

Neither Jessica nor Alberto had any previous experience, which showed me that the first steps are truly difficult and that more instructions will be needed in the future.

Jack, on the other hand, had held a guitar a few times before, so he knew the basics of how to play a note.

Within two minutes he understood the colour-based instructions and was able to improvise in the right tonality along with Eric Clapton’s “Cocaine”.

The future for Synphony

Eventually, I would like to develop an app available to everybody with access to an AR headset, once such headsets become more mainstream and lightweight in the next few years.

During the process of developing that app, I’d be really interested in practical research on how such a device/application could affect people in terms of learning efficiency and expanding the sensitivity to music.

Possible applications

- Personal entertainment/education: my main aim is to create an app that gamifies music making and learning for everybody, offering education through entertainment. Research suggests that children who are taught music with the aid of colour learn much faster and find it more pleasant. It might generally facilitate music learning for anybody who is visually under-responsive, has a short attention span or is even deaf;

- Music therapy: musical improvisation has already proven very successful in this field;

- Concert visualisation: let’s imagine Fantasia-inspired visuals generated by the instruments live on stage.

References:

van Campen, C. (2007). The Hidden Sense: Synesthesia in Art and Science. Cambridge, Massachusetts: The MIT Press.

Harrison, J. (2001). Synaesthesia: the strangest thing. Oxford: Oxford University Press.

Dixon, S. (2007). Digital performance: a history of new media in theater, dance, performance art, and installation. Cambridge, Massachusetts: The MIT Press.

Chion, M. (1994). Audio-vision: sound on screen. New York: Columbia University Press.

Levitin, D. (2008). This is your brain on music: understanding a human obsession. London: Atlantic Books.

Brougher, K. (2005). Visual music: synaesthesia in art and music since 1900. London: Thames & Hudson.

Abbado, A. (2017). Visual music masters: Abstract explorations: history and contemporary research. Milan: Skira.

Turkle, S. (2012). Alone Together. New York: Basic Books.